Setting Up a Kubernetes v1.14.0 Environment

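- 1. Download the binary package from the official site
- 2. Upload it to the server
- 3. Plan the directory layout
- 4. Install the etcd 3 dependency
- 5. Deploy the master node
- 6. Create the service configuration files
  - A. kube-apiserver
  - B. kube-scheduler
  - C. kube-controller-manager
- 7. Generate the CA certificate
- 8. Start the services
- 9. Deploy the worker node
- 10. Add the service configuration files
  - A. kubelet
  - B. proxy
- 11. Start the services
- 12. Install the dashboard
- 13. Create the YAML file and deploy it
- 14. Summary of common commands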

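This article assumes basic familiarity with Linux and Docker, and that Docker is already installed and configured. If Docker is not installed yet, see the earlier post on installing Docker.

Kubernetes is an open-source container orchestration engine from Google. It supports automated deployment, large-scale scaling, and management of containerized applications. When deploying an application in production, you usually run several instances of it so that requests can be load-balanced across them.

The steps below are based on Kubernetes v1.14.0, the latest release at the time of writing.

The configuration and service files written during the installation are bundled for download: kubernete相关配置文件.rar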

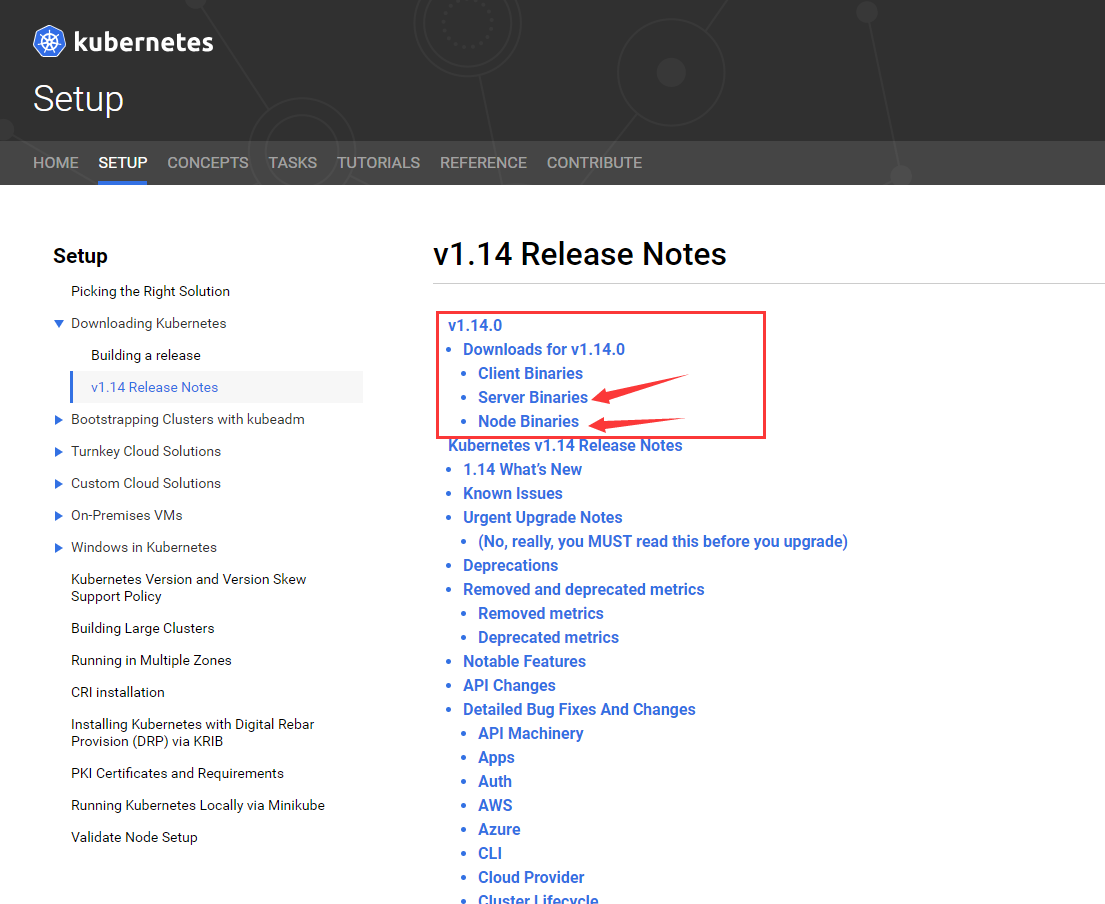

1. Download the binary package from the official site

Download page: https://kubernetes.io/docs/setup/release/notes/

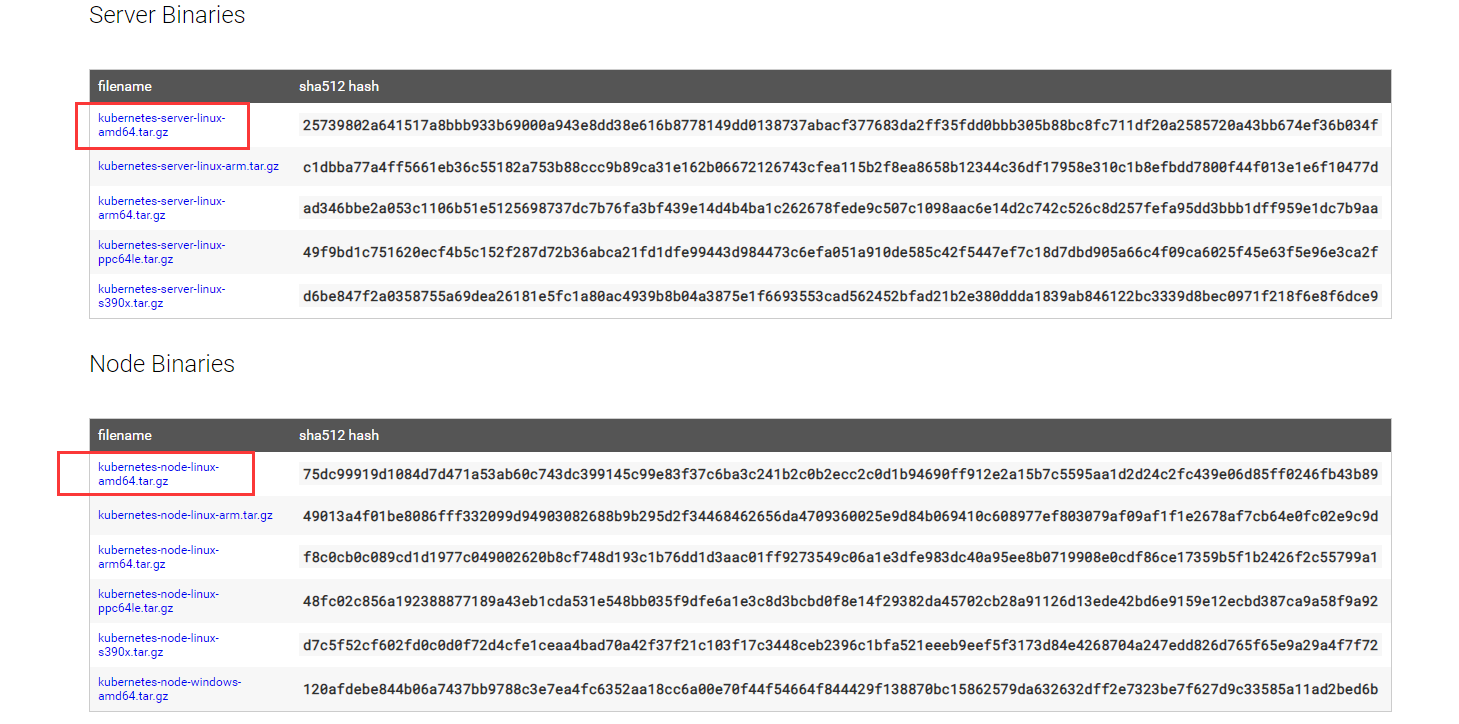

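We only need to download the Server package. It is meant for the master node and also contains the Node and Client binaries.

Choose the binary package that matches your operating system. I use CentOS, so I simply downloaded the first one in the list (kubernetes-server-linux-amd64.tar.gz).

2. Upload it to the server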

# cd /home

# tar xzf kubernetes-server-linux-amd64.tar.gz

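Extracting the archive yields a set of executables. Next we register them as system services and add the configuration parameters each one needs.

3. Plan the directory layout

For example, we keep the Kubernetes binaries, configuration files, and certificates together under /mnt/kubernetes: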

# mkdir -p /mnt/kubernetes/{bin,cfg,pki}

# mv /home/kubernetes/server/bin/{kube-apiserver,kube-scheduler,kube-controller-manager,kubectl} /mnt/kubernetes/bin

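The command above moves the executables that the master node needs into the planned /mnt/kubernetes/bin directory.

4. Install the etcd 3 dependency

Install it directly from the online repository: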

# yum install etcd -y

After installation, if you are deploying only a single-node etcd, just start the etcd service:

# systemctl start etcd

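In production you would normally deploy an etcd cluster. Doing so is straightforward: run the installation command above on each server, then edit the following configuration file on each one:

# vi /etc/etcd/etcd.conf

Change the following settings: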

ETCD_NAME=node1

ETCD_DATA_DIR="/var/lib/etcd/node1.etcd"

ETCD_LISTEN_PEER_URLS="http://192.168.3.34:2380"

ETCD_LISTEN_CLIENT_URLS="http://192.168.3.34:2379"

ETCD_INITIAL_ADVERTISE_PEER_URLS="http://192.168.3.34:2380"

ETCD_ADVERTISE_CLIENT_URLS="http://192.168.3.34:2379"

ETCD_INITIAL_CLUSTER="node1=http://192.168.3.34:2380,node2=http://192.168.3.35:2380,node3=http://192.168.3.36:2380"

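Note: the main changes are the IP address and the node name. For example, the first machine is named node1 with IP 192.168.3.34, and the second machine is configured as node2 with IP 192.168.3.35. ETCD_INITIAL_CLUSTER lists all members and stays the same on every node.

Once configured, start the etcd service on each of the three servers.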
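Because only the node name and IP differ between members, the per-node settings can be generated rather than edited by hand. The following is a minimal sketch under that assumption; the function name `render_etcd_conf` is hypothetical, and the names and addresses are the example values used above.

```shell
# Print the etcd.conf settings shown above for one cluster member.
# Arguments: node name, node IP, full initial-cluster string.
render_etcd_conf() {
  local name="$1" ip="$2" cluster="$3"
  cat <<EOF
ETCD_NAME=${name}
ETCD_DATA_DIR="/var/lib/etcd/${name}.etcd"
ETCD_LISTEN_PEER_URLS="http://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="http://${ip}:2379"
ETCD_INITIAL_ADVERTISE_PEER_URLS="http://${ip}:2380"
ETCD_ADVERTISE_CLIENT_URLS="http://${ip}:2379"
ETCD_INITIAL_CLUSTER="${cluster}"
EOF
}

# The member list is identical on every node (example addresses from this article).
CLUSTER="node1=http://192.168.3.34:2380,node2=http://192.168.3.35:2380,node3=http://192.168.3.36:2380"

# Print the settings for node2; review them before merging into /etc/etcd/etcd.conf.
render_etcd_conf node2 192.168.3.35 "$CLUSTER"
```

The output is only printed, not written to /etc/etcd/etcd.conf, so it can be reviewed first.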

5. Deploy the master node

The master node runs three services:

kube-apiserver

kube-scheduler

kube-controller-manager

6. Create the service configuration files

Each service needs its own configuration file:

A. kube-apiserver

Create the configuration file:

# vi /mnt/kubernetes/cfg/kube-apiserver

Contents:

# Log to standard error
KUBE_LOGTOSTDERR="--logtostderr=true"

# Log level
KUBE_LOG_LEVEL="--v=4"

# etcd server address
KUBE_ETCD_SERVERS="--etcd-servers=http://127.0.0.1:2379"

# Address the API server listens on
KUBE_API_ADDRESS="--insecure-bind-address=0.0.0.0"

# Port the API server listens on
KUBE_API_PORT="--insecure-port=8080"

# Address advertised to cluster members for reaching the API server
KUBE_ADVERTISE_ADDR="--advertise-address=0.0.0.0"

# Allow containers to request privileged mode (default: false)
KUBE_ALLOW_PRIV="--allow-privileged=true"

# IP range from which Service cluster IPs are allocated
KUBE_SERVICE_ADDRESSES="--service-cluster-ip-range=10.20.0.1/24"

# Certificate files
KUBE_API_ARGS1="--client-ca-file=/mnt/kubernetes/pki/ca.crt"
KUBE_API_ARGS2="--tls-cert-file=/mnt/kubernetes/pki/server.crt"
KUBE_API_ARGS3="--tls-private-key-file=/mnt/kubernetes/pki/server.key"

KUBE_ADMISSION_CONTROL="--admission-control=NamespaceLifecycle,NamespaceExists,LimitRanger,ServiceAccount,ResourceQuota"

Create the systemd service file:

# vi /lib/systemd/system/kube-apiserver.service

Contents:

[Unit]
Description=Kubernetes API Server
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=-/mnt/kubernetes/cfg/kube-apiserver
ExecStart=/mnt/kubernetes/bin/kube-apiserver \
    ${KUBE_LOGTOSTDERR} \
    ${KUBE_LOG_LEVEL} \
    ${KUBE_ETCD_SERVERS} \
    ${KUBE_API_ADDRESS} \
    ${KUBE_API_PORT} \
    ${KUBE_ADVERTISE_ADDR} \
    ${KUBE_ALLOW_PRIV} \
    ${KUBE_SERVICE_ADDRESSES} \
    ${KUBE_ADMISSION_CONTROL} \
    ${KUBE_API_ARGS1} \
    ${KUBE_API_ARGS2} \
    ${KUBE_API_ARGS3}
Restart=on-failure

[Install]
WantedBy=multi-user.target

B. kube-scheduler

Create the configuration file:

# vi /mnt/kubernetes/cfg/kube-scheduler

Contents:

KUBE_LOGTOSTDERR="--logtostderr=true"

KUBE_LOG_LEVEL="--v=4"

KUBE_MASTER="--master=127.0.0.1:8080"

KUBE_LEADER_ELECT="--leader-elect"

Create the systemd service file:

# vi /lib/systemd/system/kube-scheduler.service

Contents:

[Unit]
Description=Kubernetes Scheduler
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=-/mnt/kubernetes/cfg/kube-scheduler
ExecStart=/mnt/kubernetes/bin/kube-scheduler \
    ${KUBE_LOGTOSTDERR} \
    ${KUBE_LOG_LEVEL} \
    ${KUBE_MASTER} \
    ${KUBE_LEADER_ELECT}
Restart=on-failure

[Install]
WantedBy=multi-user.target

C. kube-controller-manager

Create the configuration file:

# vi /mnt/kubernetes/cfg/kube-controller-manager

Contents:

KUBE_LOGTOSTDERR="--logtostderr=true"
KUBE_LOG_LEVEL="--v=4"
KUBE_MASTER="--master=127.0.0.1:8080"

# Referenced by the service file; defined here so that it does not expand to an empty argument
KUBE_LEADER_ELECT="--leader-elect"

KUBE_CONTROLLER_MANAGER_ARGS1="--service-account-private-key-file=/mnt/kubernetes/pki/server.key"
KUBE_CONTROLLER_MANAGER_ARGS2="--root-ca-file=/mnt/kubernetes/pki/ca.crt"

Create the systemd service file:

# vi /lib/systemd/system/kube-controller-manager.service

Contents:

[Unit]
Description=Kubernetes Controller Manager
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=-/mnt/kubernetes/cfg/kube-controller-manager
ExecStart=/mnt/kubernetes/bin/kube-controller-manager \
    ${KUBE_LOGTOSTDERR} \
    ${KUBE_LOG_LEVEL} \
    ${KUBE_MASTER} \
    ${KUBE_LEADER_ELECT} \
    ${KUBE_CONTROLLER_MANAGER_ARGS1} \
    ${KUBE_CONTROLLER_MANAGER_ARGS2}
Restart=on-failure

[Install]
WantedBy=multi-user.target

7. Generate the CA certificate

Download easyrsa3:

# cd /home

# curl -L -O https://storage.googleapis.com/kubernetes-release/easy-rsa/easy-rsa.tar.gz

# tar xzf easy-rsa.tar.gz

# cd easy-rsa-master/easyrsa3

# ./easyrsa init-pki

# ./easyrsa --batch "--req-cn=10.20.0.1@`date +%s`" build-ca nopass

# ./easyrsa --subject-alt-name="IP: 10.20.0.1" build-server-full server nopass

# cp pki/ca.crt pki/issued/server.crt pki/private/server.key /mnt/kubernetes/pki/
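Before starting the services it can help to confirm that the three files were copied and that the server certificate really chains to the CA. A minimal sketch, assuming `openssl` is installed; the function name `check_pki` is hypothetical, and the directory is the one planned earlier.

```shell
# Verify that ca.crt, server.crt and server.key exist and are non-empty,
# then ask openssl to validate server.crt against ca.crt.
check_pki() {
  local dir="$1" f
  for f in ca.crt server.crt server.key; do
    [ -s "$dir/$f" ] || { echo "missing or empty: $dir/$f" >&2; return 1; }
  done
  openssl verify -CAfile "$dir/ca.crt" "$dir/server.crt"
}

# Usage:
#   check_pki /mnt/kubernetes/pki
# To inspect the subject alternative names in the certificate:
#   openssl x509 -in /mnt/kubernetes/pki/server.crt -noout -text
```

The function returns a non-zero status as soon as one file is missing, so it can gate the service start in a script.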

8. Start the services

# systemctl daemon-reload

# systemctl restart etcd

# systemctl restart kube-apiserver

# systemctl restart kube-controller-manager

# systemctl restart kube-scheduler

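Mind the start order: first make sure etcd (or the etcd cluster) is running normally, then start kube-apiserver, and finally start the remaining two services.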
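The ordering rule can be enforced with a small loop that stops at the first service that fails to come up. This is a sketch, not part of the original setup: `start_in_order` and the `SYSTEMCTL` override are hypothetical names introduced here so that the loop can be dry-run with a stand-in command.

```shell
# Command used to control services; override it (for example with a stub) for a dry run.
SYSTEMCTL="${SYSTEMCTL:-systemctl}"

# Restart each service in the given order and stop at the first failure.
start_in_order() {
  local svc
  for svc in "$@"; do
    "$SYSTEMCTL" restart "$svc" || { echo "failed to start $svc" >&2; return 1; }
    "$SYSTEMCTL" is-active --quiet "$svc" || { echo "$svc is not active" >&2; return 1; }
    echo "$svc started"
  done
}

# Usage (as root, on the master node):
#   systemctl daemon-reload
#   start_in_order etcd kube-apiserver kube-controller-manager kube-scheduler
```

Note that `is-active` only reports the state at that instant; a service that crashes a few seconds later still needs to be caught by reading the logs.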

9. Deploy the worker node

# cd /home

# mv /home/kubernetes/server/bin/{kubelet,kube-proxy} /mnt/kubernetes/bin/

10. Add the service configuration files

A. kubelet

Create the kubeconfig file:

# vi /mnt/kubernetes/cfg/kubelet.kubeconfig

Contents:

apiVersion: v1
kind: Config
clusters:
- cluster:
    server: http://127.0.0.1:8080
  name: local
contexts:
- context:
    cluster: local
  name: local
current-context: local

Create the configuration file:

# vi /mnt/kubernetes/cfg/kubelet

Contents:

# Log to standard error
KUBE_LOGTOSTDERR="--logtostderr=true"

# Log level
KUBE_LOG_LEVEL="--v=4"

# IP address the kubelet listens on
NODE_ADDRESS="--address=0.0.0.0"

# Port the kubelet listens on
NODE_PORT="--port=10250"

# Custom node name
NODE_HOSTNAME="--hostname-override=127.0.0.1"

# Path to the kubeconfig that points at the API server
KUBELET_KUBECONFIG="--kubeconfig=/mnt/kubernetes/cfg/kubelet.kubeconfig"

# Allow containers to request privileged mode (default: false)
KUBE_ALLOW_PRIV="--allow-privileged=true"

# Cluster DNS settings
KUBELET_DNS_IP="--cluster-dns=172.17.0.1"
KUBELET_DNS_DOMAIN="--cluster-domain=cluster.local"

# Do not refuse to start when swap is enabled on the node
KUBELET_SWAP="--fail-swap-on=false"

Create the systemd service file:

# vi /lib/systemd/system/kubelet.service

Contents:

[Unit]
Description=Kubernetes Kubelet
After=docker.service
Requires=docker.service

[Service]
EnvironmentFile=-/mnt/kubernetes/cfg/kubelet
ExecStart=/mnt/kubernetes/bin/kubelet \
    ${KUBE_LOGTOSTDERR} \
    ${KUBE_LOG_LEVEL} \
    ${NODE_ADDRESS} \
    ${NODE_PORT} \
    ${NODE_HOSTNAME} \
    ${KUBELET_KUBECONFIG} \
    ${KUBE_ALLOW_PRIV} \
    ${KUBELET_DNS_IP} \
    ${KUBELET_DNS_DOMAIN} \
    ${KUBELET_SWAP}
Restart=on-failure
KillMode=process

[Install]
WantedBy=multi-user.target

B. proxy

Create the configuration file:

# vi /mnt/kubernetes/cfg/kube-proxy

Contents:

# Log to standard error
KUBE_LOGTOSTDERR="--logtostderr=true"

# Log level
KUBE_LOG_LEVEL="--v=4"

# Custom node name
NODE_HOSTNAME="--hostname-override=127.0.0.1"

# API server address
KUBE_MASTER="--master=http://127.0.0.1:8080"

Create the systemd service file:

# vi /lib/systemd/system/kube-proxy.service

Contents:

[Unit]
Description=Kubernetes Proxy
After=network.target

[Service]
EnvironmentFile=-/mnt/kubernetes/cfg/kube-proxy
ExecStart=/mnt/kubernetes/bin/kube-proxy \
    ${KUBE_LOGTOSTDERR} \
    ${KUBE_LOG_LEVEL} \
    ${NODE_HOSTNAME} \
    ${KUBE_MASTER}
Restart=on-failure

[Install]
WantedBy=multi-user.target

11. Start the services

# systemctl daemon-reload

# systemctl restart kubelet

# systemctl restart kube-proxy

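The Kubernetes setup is now complete. Both the master and the worker nodes can be run as a cluster. The configuration is the same: repeat the steps above on every server, changing the IP addresses in all configuration files to each server's own IP address. To verify the installation, run the commands below. The export line is single-quoted so that $PATH is expanded at login time rather than when the line is written:

# echo 'export PATH=$PATH:/mnt/kubernetes/bin' >> /etc/profile

# source /etc/profile

# kubectl get nodes

If the node is listed with status Ready, the cluster was set up successfully.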
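On a cluster with several workers, reading the STATUS column by eye gets tedious. The sketch below filters the `kubectl get nodes` table and prints only the nodes that are not Ready; it assumes the default column layout (NAME first, STATUS second), and the function name `not_ready_nodes` is hypothetical.

```shell
# Read `kubectl get nodes` output on stdin and print the names of nodes
# whose STATUS does not start with "Ready". The header row is skipped.
not_ready_nodes() {
  awk 'NR > 1 && $2 !~ /^Ready/ { print $1 }'
}

# Usage:
#   kubectl get nodes | not_ready_nodes
# An empty result means every listed node reported Ready.
```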

12. Install the dashboard

First download the YAML file from the official site. It needs a few modifications; the modified contents are:

# Copyright 2017 The Kubernetes Authors.
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
#     http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.

# ------------------- Dashboard Secret ------------------- #

apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-certs
  namespace: kube-system
type: Opaque

---
# ------------------- Dashboard Service Account ------------------- #

apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kube-system

---
# ------------------- Dashboard Role & Role Binding ------------------- #

kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: kubernetes-dashboard-minimal
  namespace: kube-system
rules:
  # Allow Dashboard to create 'kubernetes-dashboard-key-holder' secret.
- apiGroups: [""]
  resources: ["secrets"]
  verbs: ["create"]
  # Allow Dashboard to create 'kubernetes-dashboard-settings' config map.
- apiGroups: [""]
  resources: ["configmaps"]
  verbs: ["create"]
  # Allow Dashboard to get, update and delete Dashboard exclusive secrets.
- apiGroups: [""]
  resources: ["secrets"]
  resourceNames: ["kubernetes-dashboard-key-holder", "kubernetes-dashboard-certs"]
  verbs: ["get", "update", "delete"]
  # Allow Dashboard to get and update 'kubernetes-dashboard-settings' config map.
- apiGroups: [""]
  resources: ["configmaps"]
  resourceNames: ["kubernetes-dashboard-settings"]
  verbs: ["get", "update"]
  # Allow Dashboard to get metrics from heapster.
- apiGroups: [""]
  resources: ["services"]
  resourceNames: ["heapster"]
  verbs: ["proxy"]
- apiGroups: [""]
  resources: ["services/proxy"]
  resourceNames: ["heapster", "http:heapster:", "https:heapster:"]
  verbs: ["get"]

---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: kubernetes-dashboard-minimal
  namespace: kube-system
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: kubernetes-dashboard-minimal
subjects:
- kind: ServiceAccount
  name: kubernetes-dashboard
  namespace: kube-system

---
# ------------------- Dashboard Deployment ------------------- #

kind: Deployment
apiVersion: apps/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kube-system
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      k8s-app: kubernetes-dashboard
  template:
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
    spec:
      containers:
      - name: kubernetes-dashboard
        image: k8s.gcr.io/kubernetes-dashboard-amd64:v1.10.1
        ports:
        - containerPort: 8443
          protocol: TCP
        args:
          - --auto-generate-certificates
          # Uncomment the following line to manually specify Kubernetes API server Host
          # If not specified, Dashboard will attempt to auto discover the API server and connect
          # to it. Uncomment only if the default does not work.
          # - --apiserver-host=http://my-address:port
        volumeMounts:
        - name: kubernetes-dashboard-certs
          mountPath: /certs
          # Create on-disk volume to store exec logs
        - mountPath: /tmp
          name: tmp-volume
        #livenessProbe:
        #  httpGet:
        #    scheme: HTTPS
        #    path: /
        #    port: 8443
        #  initialDelaySeconds: 30
        #  timeoutSeconds: 30
      volumes:
      - name: kubernetes-dashboard-certs
        secret:
          secretName: kubernetes-dashboard-certs
      - name: tmp-volume
        emptyDir: {}
      serviceAccountName: kubernetes-dashboard
      # Comment the following tolerations if Dashboard must not be deployed on master
      tolerations:
      - key: node-role.kubernetes.io/master
        effect: NoSchedule

---
# ------------------- Dashboard Service ------------------- #

kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kube-system
spec:
  ports:
    - port: 443
      targetPort: 8443
  selector:
    k8s-app: kubernetes-dashboard
  type: NodePort

13. Create the YAML file and deploy it

# kubectl apply -f /home/kubernates-dashboard.yaml

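Most likely the deployment fails, and the logs report: failed pulling image "k8s.gcr.io/pause:3.1". This means the Docker image pull failed: the images are hosted on Google's registry, which is not reachable from this network. We can pull a copy from a mirror repository instead and retag it: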

# docker pull mirrorgooglecontainers/pause:3.1

# docker tag docker.io/mirrorgooglecontainers/pause:3.1 k8s.gcr.io/pause:3.1

Once the image is downloaded, either delete the pod so that it is recreated, or delete the whole dashboard deployment and apply it again:

# kubectl delete -f /home/kubernates-dashboard.yaml

# kubectl apply -f /home/kubernates-dashboard.yaml

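Most likely it fails again, and the logs report: failed pulling image "k8s.gcr.io/kubernetes-dashboard-amd64:v1.10.1".

This is another image from Google's registry that cannot be pulled. The problem is the same, and so is the fix: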

# docker pull mirrorgooglecontainers/kubernetes-dashboard-amd64:v1.10.1

# docker tag mirrorgooglecontainers/kubernetes-dashboard-amd64:v1.10.1 k8s.gcr.io/kubernetes-dashboard-amd64:v1.10.1
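Since the same pull-and-retag pattern applies to every k8s.gcr.io image, it can be wrapped in a helper. This is a sketch under the assumption that the mirror repository publishes each image under the same name and tag; `mirror_name`, `pull_via_mirror`, and the `MIRROR` and `DOCKER` variables are hypothetical names introduced here.

```shell
# Mirror repository prefix and docker command; both can be overridden.
MIRROR="${MIRROR:-mirrorgooglecontainers}"
DOCKER="${DOCKER:-docker}"

# Map a k8s.gcr.io image reference to its mirror equivalent,
# e.g. k8s.gcr.io/pause:3.1 -> mirrorgooglecontainers/pause:3.1
mirror_name() {
  echo "${MIRROR}/${1#k8s.gcr.io/}"
}

# Pull the mirrored image, then tag it with the name the manifests expect.
pull_via_mirror() {
  local target="$1" src
  src="$(mirror_name "$target")"
  "$DOCKER" pull "$src" && "$DOCKER" tag "$src" "$target"
}

# Usage:
#   pull_via_mirror k8s.gcr.io/pause:3.1
#   pull_via_mirror k8s.gcr.io/kubernetes-dashboard-amd64:v1.10.1
```

Setting `DOCKER=echo` prints the two commands instead of running them, which is a cheap way to check the mapping first.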

Apply it once more; this time it should succeed.

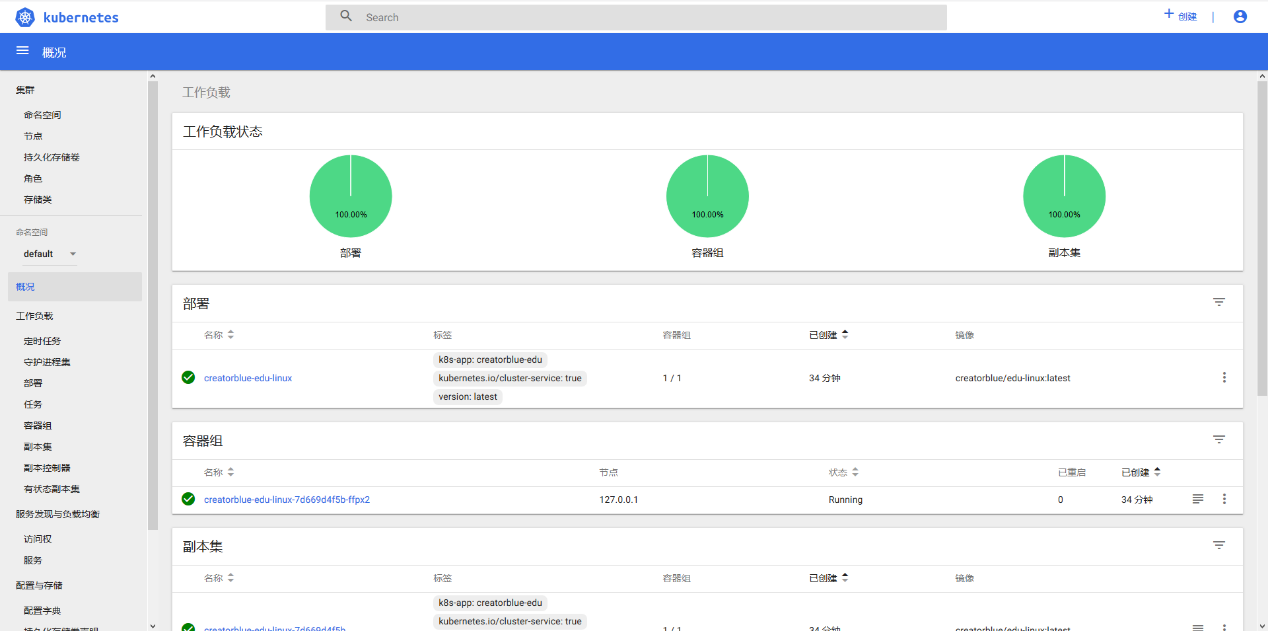

Run the following command to see which port the service is exposed on:

# kubectl get svc -A -o wide

Output like the following means the dashboard is being served on node port 32402:

kube-system kubernetes-dashboard NodePort 10.20.0.253 <none> 443:32402/TCP 77m k8s-app=kubernetes-dashboard
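The node port is the number after the colon in the PORT(S) column. A minimal sketch for pulling it out and building the dashboard URL; `node_port` and `dashboard_url` are hypothetical helper names, and the values are the ones from the sample output above.

```shell
# Extract the node port from a PORT(S) value such as "443:32402/TCP".
node_port() {
  local ports="$1"
  ports="${ports#*:}"      # drop the service port and the colon
  echo "${ports%%/*}"      # drop the protocol suffix
}

# Build the HTTPS URL of the dashboard for a given node address.
dashboard_url() {
  echo "https://$1:$(node_port "$2")/"
}

# Usage:
#   dashboard_url 127.0.0.1 "443:32402/TCP"
# kubectl can also print the port directly:
#   kubectl -n kube-system get svc kubernetes-dashboard -o jsonpath='{.spec.ports[0].nodePort}'
```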

Run the following command to check that the pod is running normally:

# kubectl get pod -A

Output like the following means the dashboard is working:

kube-system kubernetes-dashboard-c7f945c8-djwfk 1/1 Running 0 79m

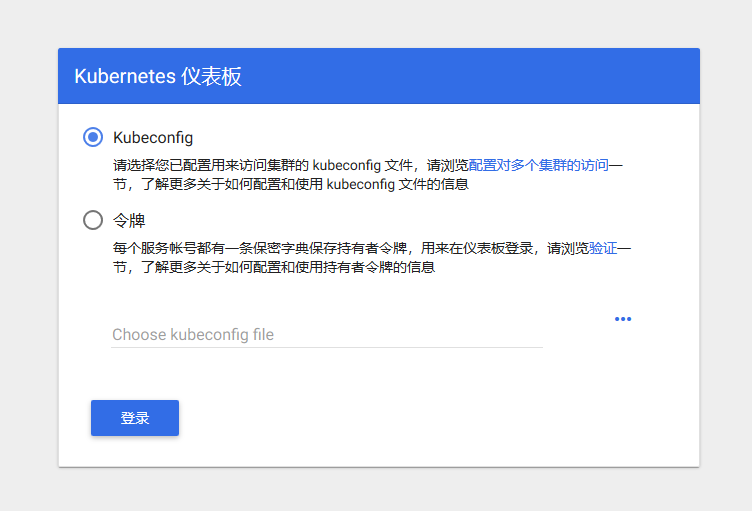

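Now you can open the dashboard in a browser and manage the whole cluster from there.

URL: https://127.0.0.1:32402/#!/login

The login page offers two options: sign in with a kubeconfig file, or sign in with a token. The token is the simpler route. To obtain one, run the following on the master node: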

# kubectl get secrets -A

Find the dashboard-token entry and run the following command, substituting the actual secret name:

# kubectl describe secrets -n kube-system kubernetes-dashboard-token

This prints a long bearer token (a JWT). Copy it, paste it into the login page in the browser, and sign in.
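The two lookups can be chained so that the token is printed without copying the secret name by hand. A sketch, assuming the secret name starts with `kubernetes-dashboard-token` and that `kubectl describe secret` prints a line beginning with `token:`; the function names are hypothetical.

```shell
# Read `kubectl -n kube-system get secrets` output on stdin and print the
# name of the first secret that starts with "kubernetes-dashboard-token".
find_token_secret() {
  awk '$1 ~ /^kubernetes-dashboard-token/ { print $1; exit }'
}

# Read `kubectl describe secret` output on stdin and print the token value.
extract_token() {
  awk '$1 == "token:" { print $2; exit }'
}

# Usage:
#   name="$(kubectl -n kube-system get secrets | find_token_secret)"
#   kubectl -n kube-system describe secret "$name" | extract_token
```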

14. Summary of common commands

Starting the services

Master node:

# systemctl restart etcd

# systemctl restart kube-apiserver

# systemctl restart kube-controller-manager

# systemctl restart kube-scheduler

Worker node:

# systemctl restart docker

# systemctl restart kubelet

# systemctl restart kube-proxy

If a service fails to start, follow the system log in real time:

# tail -f /var/log/messages

Using Kubernetes

# kubectl get pod -A -o wide

# kubectl get deployment -A

# kubectl get svc -A

# kubectl get secrets -A

The corresponding delete, describe, and log commands:

# kubectl delete pod **

# kubectl describe pod **

# kubectl logs **

Deploy from a YAML file:

# kubectl apply -f **.yaml

Delete a deployment:

# kubectl delete -f **.yaml

Change the number of replicas of a deployment:

# kubectl scale deployment demo --replicas=3

Expose a service:

# kubectl expose deployment demo --port=8080 --type=LoadBalancer